9 CRM Fields You Should Convert from Picklist to Rich Text in 2026

Most picklist fields in your CRM exist because the rich-text version would have been blank 80% of the time. AI removes that constraint. Here are the nine fields to convert first, with the prompts.

Most picklist fields in your CRM aren't picklists because picklists were the right design. They're picklists because the rich-text version of the same field would have been blank 80% of the time. A rep with 90 seconds between calls picks "Other" and moves on.

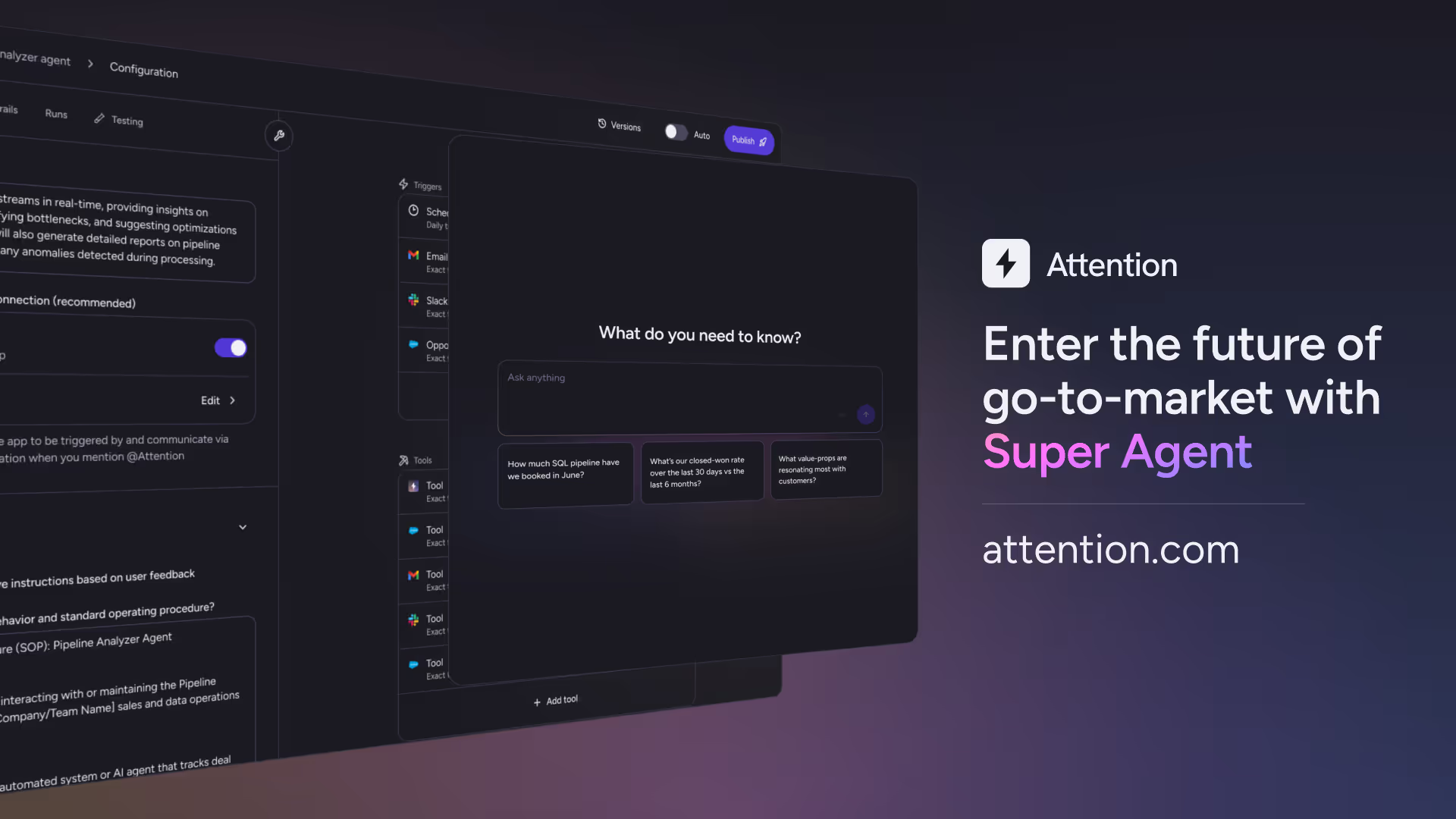

That constraint is gone. AI can now extract a paragraph of context from a sales call transcript and write it directly into a Salesforce or HubSpot field, which means rich-text fields are viable for the first time since the CRM was invented. The full case for why this matters is in the anchor essay on this shift. This piece is the tactical companion: the specific fields revenue teams should migrate first, what each rich-text version should capture, and how to write the AI prompt that fills it.

The criteria for picking the nine below: each is a field where the picklist version actively destroys value, where the rich-text version meaningfully improves a downstream agent or workflow, and where the prompt to extract it from a call is straightforward enough to write in a few sentences.

1. Pain Point

Picklist version: a 5-option dropdown with values like Cost, Efficiency, Compliance, Growth, Other.

Why it fails: Pain Point = "Cost" tells you nothing about what the customer actually said. A coaching agent can't reason on it, a forecasting agent can't weigh it, and the next AE who picks up the deal has to re-discover the same context.

Rich-text version captures: the customer's specific articulation of the problem, the trigger event that made it urgent, and any quantification they offered.

AI prompt: "In two to four sentences, summarize the most acute pain point the customer articulated on the call. Include the trigger event if mentioned and any specific cost or impact they quantified."

2. Decision Criteria

Picklist version: Pricing, Features, Integration, Support, Other.

Why it fails: "Decision Criteria = Integration" doesn't tell you which integration in particular the buyer cares about, which past vendor experiences are shaping their evaluation, or which stakeholder on the buying committee holds informal veto power. Those details determine whether you win the deal. The picklist hides them.

Rich-text version captures: the weighted criteria the buying committee actually uses, who controls each one, and any internal constraints that shape the evaluation.

AI prompt: "Summarize the customer's stated decision criteria, weighted by the order they emphasized. For each criterion, note which stakeholder owns it and any internal mandate or constraint they referenced. Maximum 250 words."

3. Next Steps

Picklist version: usually a free-text field that reps fill once and never update, or a picklist of generic stages like "Send Proposal."

Why it fails: Next Steps is the field reps complain about most. Most CRMs treat it as a gate to advance the stage, so it gets filled once at the start of the deal and never updated as the deal evolves. Stale next steps are worse than no next steps because they create false confidence in the pipeline review.

Rich-text version captures: the specific actions agreed to on the call, the owner, and the timing if discussed.

AI prompt: "List the two most important next steps the customer agreed to on this call, with the owner (us or them) and timing if mentioned. Maximum 200 characters per next step."

4. Champion Profile

Picklist version: usually doesn't exist, or exists as a binary "Champion Identified Yes/No."

Why it fails: Yes/No tells you nothing about who the champion is, what they care about, or whether they have the political capital to push the deal across the line. Account-based selling agents in 2026 need the answers to those three questions, not just a checkbox.

Rich-text version captures: the champion's name and role, their stated motivation for backing the deal, the internal stakeholders they need to convince, and any indicators of their influence inside the account.

AI prompt: "Identify the champion on this account. Capture their name and title, the specific reason they want this deal to happen, the stakeholders they have said they need to bring along, and any signals of their internal influence. If no clear champion has emerged, write 'No champion identified.' Maximum 300 words."

5. Competitive Context

Picklist version: a multi-select of vendor names. Competitor = Vendor A, Vendor B, Vendor C, Other.

Why it fails: the multi-select tells you who's in the deal, not what was said about them. A buyer's specific objection — what's working, what isn't, where the gap is — is the actionable part. The picklist throws it away.

Rich-text version captures: every named competitor mentioned, the specific objection or comparison the buyer raised against each one, and any verbatim quote that captures the buyer's framing.

AI prompt: "For each competitor mentioned on this call, capture the buyer's specific objection or comparison, plus a verbatim quote if they offered one. If multiple competitors came up, list each separately. Maximum 350 words total."

6. Mutual Action Plan / MAP Status

Picklist version: a stage value like "Validation," "Proposal Sent," or "Contract Out."

Why it fails: stage doesn't tell you what's actually happening between stages. A deal in "Validation" could be a security review going smoothly, or it could be a stalled procurement process where the AE hasn't been able to reach the buyer in three weeks. The forecasting agent treats both the same way until you change it.

Rich-text version captures: the current state of every workstream the deal depends on (technical evaluation, security review, legal, procurement), the named owner on each, and any blockers.

AI prompt: "Summarize the state of each workstream the deal depends on, drawn from this call and prior calls if relevant. For each, note the current step, the owner on the customer side, and any blocker that has come up. Workstreams to track: technical evaluation, security review, procurement, legal, executive sponsorship. Maximum 400 words."

7. MEDDPICC or MEDDIC Summary

Picklist version: usually a series of separate picklist fields, one per letter, each with a Yes/No or stage value.

Why it fails: MEDDPICC is a framework for capturing nuance, and the picklist version captures none of it. "Economic Buyer Identified = Yes" doesn't tell you whether the AE has confirmed buying authority directly, heard the name from a champion, or guessed based on org chart inference. The forecasting agent can't tell the difference. The deal that closes and the deal that slips look identical in the picklist version.

Rich-text version captures: a paragraph per letter, with the key fact and the source (which call, which stakeholder, what was said).

AI prompt: "For each letter of MEDDPICC, write a one-paragraph summary of what we know, including the source. Letters: Metrics, Economic Buyer, Decision Criteria, Decision Process, Paper Process, Identify Pain, Champion, Competition. If a letter has no information yet, write 'Not yet established.' Maximum 600 words total."

8. Stakeholder Sentiment

Picklist version: a Health Score with values like Green, Yellow, Red.

Why it fails: Yellow is exactly as useful as Other. A churn-detection agent reading "Health Score = Yellow" can't tell you that the champion casually mentioned they're interviewing alternative vendors on the last QBR call. The narrative is where the early signal lives.

Rich-text version captures: per stakeholder, what they said about the relationship, the product, and any indicators of expansion or churn risk.

AI prompt: "For each customer stakeholder on this call, summarize their sentiment toward our product and our company. Capture any verbatim quote that signals strong satisfaction, frustration, or churn risk. Note any mention of competitive evaluation, budget pressure, or stakeholder change at the customer's company. Maximum 500 words."

9. Use Case / Implementation Plan

Picklist version: a tag or category like "Sales Coaching" or "Pipeline Inspection."

Why it fails: two customers can both pick "Sales Coaching" and have completely different implementation plans, success metrics, and rollout timelines. When the CSM picks up the account post-close, the picklist tells them nothing about what the customer was actually promised or how success will be measured.

Rich-text version captures: the specific use cases the customer wants to solve, the success metrics they'll use to evaluate the rollout, the implementation timeline they expect, and any constraints they raised.

AI prompt: "Summarize the customer's intended use case for the product, including the specific problems they want to solve, the success metrics they will use to evaluate the rollout, the implementation timeline they expect, and any internal constraints that will shape the project. Maximum 400 words."

How to actually do this in your CRM

The migration is additive, not destructive. The right sequence:

First, audit which of these nine fields already exist in your Salesforce or HubSpot org as picklists. For each one, decide whether the picklist drives a workflow you actually use (stage routing, reportable structure, dashboards) or whether it exists because the rich-text version was historically unrealistic. Keep the ones that drive workflow. Add a parallel rich-text field for the ones that don't.

Second, write the AI prompt for each new rich-text field. The prompts above are starting points. Customize them to your industry, your sales motion, and your team's terminology. The prompt is the contract between the AI and the field, and it pays to spend a few iterations getting it right.
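One way to treat the prompt as a contract is to keep each prompt next to the limits it states and check outputs against them. The Python sketch below is illustrative only: the `FieldPrompt` class, the `violations` helper, and the field names ending in `__c` are assumptions made for this example, not Attention's or Salesforce's configuration format. The prompt text comes from the Decision Criteria and Next Steps entries above.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class FieldPrompt:
    """One rich-text field, its prompt, and the limits the prompt states."""
    field_name: str                           # hypothetical CRM field name
    prompt: str
    max_words: Optional[int] = None           # limit on the whole output
    max_chars_per_item: Optional[int] = None  # limit per non-empty line


FIELD_PROMPTS = [
    FieldPrompt(
        field_name="Decision_Criteria_Narrative__c",  # assumed name
        prompt=(
            "Summarize the customer's stated decision criteria, weighted by "
            "the order they emphasized. For each criterion, note which "
            "stakeholder owns it and any internal mandate or constraint "
            "they referenced. Maximum 250 words."
        ),
        max_words=250,
    ),
    FieldPrompt(
        field_name="Next_Steps_Narrative__c",  # assumed name
        prompt=(
            "List the two most important next steps the customer agreed to "
            "on this call, with the owner (us or them) and timing if "
            "mentioned. Maximum 200 characters per next step."
        ),
        max_chars_per_item=200,
    ),
]


def violations(spec: FieldPrompt, output: str) -> List[str]:
    """Return human-readable limit violations (an empty list if none)."""
    problems = []
    if spec.max_words is not None:
        word_count = len(output.split())
        if word_count > spec.max_words:
            problems.append(f"{word_count} words, limit is {spec.max_words}")
    if spec.max_chars_per_item is not None:
        # Treat each non-empty line of the output as one item.
        items = [line.strip() for line in output.splitlines() if line.strip()]
        for number, item in enumerate(items, start=1):
            if len(item) > spec.max_chars_per_item:
                problems.append(
                    f"item {number} is {len(item)} characters, "
                    f"limit is {spec.max_chars_per_item}"
                )
    return problems
```

Keeping the limit as data, not only as a sentence inside the prompt, means a changed prompt and a changed limit can be reviewed together during each iteration.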

Third, test on historical calls before going live. Attention's field configuration UI lets you preview the AI's output on real call transcripts before any of it writes to Salesforce. If the prompt produces good summaries on five recent calls, it will most likely produce good summaries on tomorrow's calls.
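The same preview idea can be sketched outside any particular tool. The snippet below is a stand-alone illustration, not Attention's API: `preview`, `fake_extract`, and the shape of the transcript dictionary are hypothetical, and `fake_extract` is only a placeholder for wherever a real model call would go.

```python
from typing import Callable, Dict, List


def preview(
    prompt: str,
    transcripts: Dict[str, str],
    extract: Callable[[str, str], str],
    max_words: int,
) -> List[dict]:
    """Run one prompt over historical transcripts without writing anywhere."""
    rows = []
    for call_id, transcript in transcripts.items():
        output = extract(prompt, transcript).strip()
        word_count = len(output.split())
        rows.append(
            {
                "call_id": call_id,
                "output": output,
                "word_count": word_count,
                # Flag empty outputs and outputs over the stated limit.
                "ok": 0 < word_count <= max_words,
            }
        )
    return rows


def fake_extract(prompt: str, transcript: str) -> str:
    """Placeholder extractor: returns the transcript's first sentence."""
    first_sentence = transcript.split(".")[0].strip()
    return first_sentence + "." if first_sentence else ""
```

A mechanical check like `ok` only catches empty or overlong outputs. Whether a summary is actually good still takes a person reading the previewed text.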

Fourth, push live and let the auto-fill run. Reps don't have to do anything different. The AI listens, extracts, and writes. The CRM gets the depth it always needed without adding a single minute to anyone's day.

Fifth, point your downstream agents at the new fields. The forecasting agent should read MEDDPICC Summary, not just Stage. The pre-call prep agent should read Decision Criteria narrative, not just the picklist. The churn detection agent should read Stakeholder Sentiment, not just Health Score. Every agentic play in your stack gets meaningfully better when the underlying fields hold context instead of buckets.
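As a rough picture of what "pointing an agent at the new fields" could mean, the sketch below assembles an agent's input from both the picklist bucket and the narrative field. Everything named here is an assumption for illustration: the `AGENT_FIELDS` mapping, the `build_context` function, and the lowercase field keys are hypothetical, and the pairings simply mirror the three examples in the paragraph above.

```python
from typing import Mapping

# agent name -> (picklist field, rich-text field); all names are hypothetical
AGENT_FIELDS = {
    "forecasting": ("stage", "meddpicc_summary"),
    "pre_call_prep": ("decision_criteria", "decision_criteria_narrative"),
    "churn_detection": ("health_score", "stakeholder_sentiment"),
}


def build_context(agent: str, record: Mapping[str, str]) -> str:
    """Assemble the text an agent reads: the bucket plus the narrative."""
    picklist_field, narrative_field = AGENT_FIELDS[agent]
    parts = []
    bucket = (record.get(picklist_field) or "").strip()
    if bucket:
        parts.append(f"{picklist_field}: {bucket}")
    narrative = (record.get(narrative_field) or "").strip()
    if narrative:
        parts.append(f"{narrative_field}: {narrative}")
    return "\n".join(parts)
```

The picklist value stays in the context, so an agent that only had "Yellow" before now sees "Yellow" plus the paragraph explaining it.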

The CRM your team uses in 2027 will be built on top of fields that didn't exist in 2024, captured at depths nobody could have asked a rep to fill. The migration starts with the nine fields above. Book a walkthrough if you want to see what the configured version of any of them looks like on real call data from your team.

FAQ

Which CRM field should I convert from picklist to rich text first?

The CRM field most teams convert first is Next Steps, because reps already complain about it the most and the rich-text version produces the largest immediate improvement in pipeline review quality. After Next Steps, the highest-leverage candidates are Decision Criteria, Pain Point, and MEDDPICC Summary, because each one feeds directly into forecasting and deal coaching workflows. Attention's field configuration UI lets you write the AI prompt, preview output on historical call data, and roll the change out one field at a time.

Will rich-text CRM fields break my existing Salesforce dashboards?

Rich-text CRM fields don't break existing Salesforce dashboards because the recommended migration is additive, not destructive. Keep the picklists you actually use for stage routing, reportable structure, and required workflows. Add rich-text fields alongside them to capture the narrative context the picklists were never able to hold. Modern reporting tools, including Salesforce's own Tableau-powered analytics, can extract structured signals from rich-text fields on demand, which means the same field that fuels an agentic workflow can also feed a chart at read-time.

How does Attention know what to write in a rich-text CRM field?

Attention's CRM auto-fill is prompt-based. Each rich-text field has a prompt the team configures once, written in plain language: the question to answer, the format constraints, the maximum length. After every sales call, the AI extracts the answer from the transcript and writes it directly into Salesforce or HubSpot. The team owns the prompt, can audit and update it at any time, and can preview the AI's output on real historical call data before pushing changes live.

What happens to picklist fields after we add rich text?

Picklist fields can stay exactly as they are after rich-text fields are added, and most teams keep them. The picklist is still the right design for stage routing, lead source classification, deal type, region, and any reportable structure that drives workflow automation. The rich-text field sits alongside the picklist and captures the narrative context. Many teams configure Attention to write to both: the picklist gets the bucket, the rich-text field gets the paragraph, and the AI handles the data entry for both.
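Writing to both fields can be pictured as one extraction producing two values. The sketch below is an illustration under stated assumptions, not Attention's implementation: `build_update` and the field names `Pain_Point__c` and `Pain_Point_Detail__c` are hypothetical, and the picklist values are the example options from the Pain Point section.

```python
from typing import Dict

# Example picklist options from the Pain Point section of this article.
PAIN_POINT_VALUES = ("Cost", "Efficiency", "Compliance", "Growth", "Other")


def build_update(bucket: str, paragraph: str) -> Dict[str, str]:
    """Pair a picklist bucket with its narrative for a single record update."""
    cleaned = bucket.strip()
    # Fall back to "Other" rather than writing a value the picklist
    # does not define.
    value = cleaned if cleaned in PAIN_POINT_VALUES else "Other"
    return {
        "Pain_Point__c": value,                     # hypothetical picklist field
        "Pain_Point_Detail__c": paragraph.strip(),  # hypothetical rich-text field
    }
```

Restricting the bucket to the defined values keeps existing reports and routing stable, while the paragraph carries whatever the bucket cannot.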

Do I need a separate AI tool or does this work inside Salesforce?

Attention writes directly to native Salesforce and HubSpot fields, including custom objects and rich-text fields, with no separate UI for the rep to learn. The CRM stays the system of record. The AI is the data entry layer that fills it. The same approach works for picklists, multi-select fields, number fields, and free-form rich-text fields, all configured by prompt. Read more about Attention's AI sales agents for the full picture of how this fits into a broader agentic workflow stack.
